3.4.2026

In the coming months, Google plans to deprecate several models across its Gemini family, including versions from the 2.0 and 2.5 lines such as Flash and Flash-Lite. For developers experimenting with AI, this kind of model lifecycle change is part of the normal pace of innovation. But for teams running LLMs in production, upcoming deprecations highlight a deeper challenge: the models your systems rely on today may change, or disappear, sooner than expected.

If you run AI in production, you already know the pattern: you finally stabilize your pipeline, tune prompts, validate outputs, and lock in predictable costs. Then a provider announces a new model release. Overnight, assumptions shift, outputs change, and the system you optimized for months behaves differently.

That’s why recent Gemini updates matter, not just for Google users but for anyone managing LLM model changes in production. Google’s ongoing Gemini updates illustrate a broader reality across LLM providers, where architectural, behavioral, and pricing changes affect production AI workflows.

The issue isn’t whether newer models are more capable, but rather what those changes mean once AI is embedded in production systems that depend on consistency.

Newer does not automatically mean better for deployed systems. A more capable model may produce different structured outputs, respond with altered formatting, or introduce latency shifts that break timing assumptions. Prompt strategies that performed reliably last quarter can suddenly degrade, forcing teams to revisit logic they thought was finalized.

Even when improvements are real, they rarely function as drop-in replacements. Production systems depend on consistency. When model behavior changes, even slightly, downstream processes can fail in subtle ways. Validation rules may stop catching errors. Edge cases resurface. Extraction outputs shift just enough to trigger unexpected bugs.
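To make the "subtle failure" concrete, here is a minimal sketch of a schema check on an extraction result. The field names and sample payloads are hypothetical and chosen only for illustration; they do not come from any real provider or product API.

```python
# Hypothetical schema for an invoice extraction result. The field names are
# illustrative assumptions, not taken from any real API.
REQUIRED_FIELDS = {"invoice_number": str, "total": float, "currency": str}


def validate_extraction(payload: dict) -> list[str]:
    """Return a list of human-readable problems; an empty list means valid."""
    problems = []
    for name, expected_type in REQUIRED_FIELDS.items():
        if name not in payload:
            problems.append(f"missing field: {name}")
        elif not isinstance(payload[name], expected_type):
            actual = type(payload[name]).__name__
            problems.append(
                f"{name}: expected {expected_type.__name__}, got {actual}"
            )
    # Flag unexpected keys too: a newer model may rename or add fields.
    for name in sorted(set(payload) - set(REQUIRED_FIELDS)):
        problems.append(f"unexpected field: {name}")
    return problems


# Output shaped the way the pipeline was originally tuned for.
old_output = {"invoice_number": "INV-001", "total": 1250.0, "currency": "EUR"}
# Same document, but the total now arrives as a formatted string.
new_output = {"invoice_number": "INV-001", "total": "1,250.00", "currency": "EUR"}

print(validate_extraction(old_output))
print(validate_extraction(new_output))
```

A check like this only catches shifts it was written to anticipate. A pipeline that tests for the presence of `total` but not its type would pass the second payload through to downstream code.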

In practice, this often means teams spend more time maintaining systems than improving them. Engineering time that could go toward shipping new features instead gets redirected toward restoring stability.

Behind every model upgrade is operational strain. Engineering teams rerun evaluations, rewrite prompts, adjust validation rules, and conduct regression testing just to maintain prior performance levels. Even when systems appear stable, silent accuracy regressions or new hallucination patterns can surface later as downstream failures.
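One way to surface silent regressions before they reach production is to score each candidate model against a fixed golden set. The sketch below assumes exact-match scoring per field and a one-point tolerance; both are illustrative choices, and the sample documents are made up.

```python
def field_accuracy(predictions: list[dict], golden: list[dict]) -> float:
    """Fraction of golden fields that the predictions reproduce exactly."""
    if len(predictions) != len(golden):
        raise ValueError("predictions and golden set must have the same length")
    total = 0
    correct = 0
    for predicted, expected in zip(predictions, golden):
        for key, value in expected.items():
            total += 1
            if predicted.get(key) == value:
                correct += 1
    return correct / total if total else 0.0


def is_regression(baseline: float, candidate: float, tolerance: float = 0.01) -> bool:
    """True when the candidate scores worse than the baseline beyond tolerance."""
    return candidate < baseline - tolerance


# Hypothetical golden set and model outputs, for illustration only.
golden = [
    {"invoice_number": "INV-001", "currency": "EUR"},
    {"invoice_number": "INV-002", "currency": "USD"},
]
current_outputs = [
    {"invoice_number": "INV-001", "currency": "EUR"},
    {"invoice_number": "INV-002", "currency": "USD"},
]
# The candidate model lowercases one currency code: a small formatting shift.
candidate_outputs = [
    {"invoice_number": "INV-001", "currency": "EUR"},
    {"invoice_number": "INV-002", "currency": "usd"},
]

baseline_score = field_accuracy(current_outputs, golden)
candidate_score = field_accuracy(candidate_outputs, golden)
print(baseline_score, candidate_score, is_regression(baseline_score, candidate_score))
```

Exact match is deliberately strict here. Teams that accept normalized values would compare after normalization instead, which changes what counts as a regression.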

Cost volatility compounds the problem. AI model upgrades frequently introduce pricing increases, and even small per-call changes can reshape unit economics at scale. Finance teams see unexpected spikes, product leaders must revisit margins, and forecasting becomes difficult because pricing stability is outside their control.
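A quick back-of-the-envelope calculation shows how a small per-call change scales. The volume and prices below are hypothetical figures chosen for round numbers, not real provider pricing.

```python
from decimal import Decimal


def monthly_cost(calls_per_month: int, price_per_call: Decimal) -> Decimal:
    """Monthly spend for a fixed call volume at a flat per-call price."""
    return calls_per_month * price_per_call


# Hypothetical figures for illustration only; not real provider pricing.
calls = 2_000_000
old_price = Decimal("0.0020")  # dollars per call before the upgrade
new_price = Decimal("0.0025")  # dollars per call after the upgrade

old_cost = monthly_cost(calls, old_price)
new_cost = monthly_cost(calls, new_price)
increase = new_cost - old_cost
percent_increase = increase / old_cost * 100

print(f"before: ${old_cost:,.2f}  after: ${new_cost:,.2f}")
print(f"extra per month: ${increase:,.2f} ({percent_increase:.0f}%)")
```

Under these assumptions, a change of five hundredths of a cent per call moves monthly spend from $4,000 to $5,000, a 25% increase with no change in volume.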

For small-scale or experimental deployments, these shifts may be manageable. But for teams processing thousands or millions of documents, even minor model changes can create major operational and financial impact.

Tooling complexity also grows with every release cycle. Many organizations end up juggling multiple model versions, fallback logic, and routing rules just to keep systems stable. The result is a fragile architecture that requires constant supervision instead of delivering reliable automation.

This cycle persists because model providers are constantly racing to build better models and push the frontier forward. That innovation pace is great for model capability, but it also means production stability for any single stack isn’t the priority. Deprecations are inevitable, breaking changes are normal, and the engineering burden ultimately lands on the teams deploying AI in production.

The rapid pace of innovation across providers guarantees that today’s cutting-edge model will soon be replaced. Organizations that architect their systems around a single version will repeatedly face disruption. Those that build on abstraction layers gain insulation from that churn and the freedom to focus on product value instead of infrastructure maintenance.
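As a rough illustration of what an abstraction layer can look like, the sketch below puts a single `extract` call in front of an ordered list of backends and falls through to the next one when a backend is unavailable. Every class and name here is hypothetical; this is a generic pattern with stub backends, not a description of how any particular platform or provider SDK is implemented.

```python
from typing import Protocol


class BackendUnavailable(Exception):
    """Raised by a backend whose model is retired or unreachable."""


class ExtractionBackend(Protocol):
    name: str

    def extract(self, document: str) -> dict: ...


class RetiredBackend:
    """Stub standing in for a model version that has been deprecated."""

    name = "stub-v1"

    def extract(self, document: str) -> dict:
        raise BackendUnavailable(f"{self.name} has been retired")


class StubBackend:
    """Stub standing in for a working model; returns a fixed result."""

    name = "stub-v2"

    def extract(self, document: str) -> dict:
        return {"invoice_number": "INV-001"}


class DocumentExtractor:
    """Stable interface: callers never name a specific model version."""

    def __init__(self, backends: list[ExtractionBackend]) -> None:
        if not backends:
            raise ValueError("at least one backend is required")
        self._backends = list(backends)
        self.last_backend_used = None

    def extract(self, document: str) -> dict:
        errors = []
        for backend in self._backends:
            try:
                result = backend.extract(document)
            except BackendUnavailable as exc:
                errors.append(str(exc))
                continue
            self.last_backend_used = backend.name
            return result
        raise RuntimeError("all backends failed: " + "; ".join(errors))


extractor = DocumentExtractor([RetiredBackend(), StubBackend()])
result = extractor.extract("raw invoice text")
print(result, extractor.last_backend_used)
```

The calling code depends only on `DocumentExtractor.extract`, so swapping or reordering backends does not touch it. A real layer would also need output normalization and validation so that different backends return the same shape.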

The more important question is no longer which model performs best today. It is how to build systems that remain stable as models evolve.

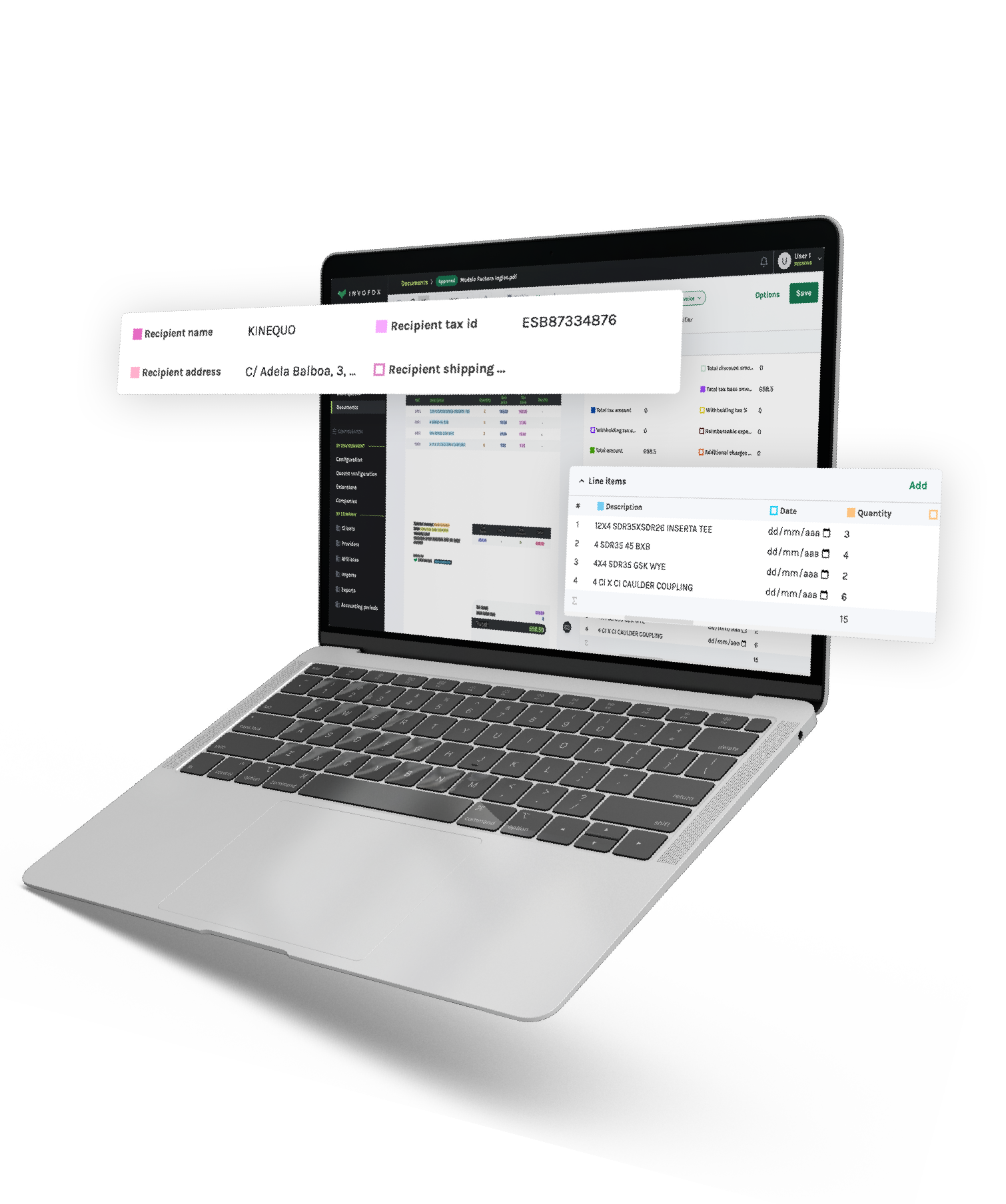

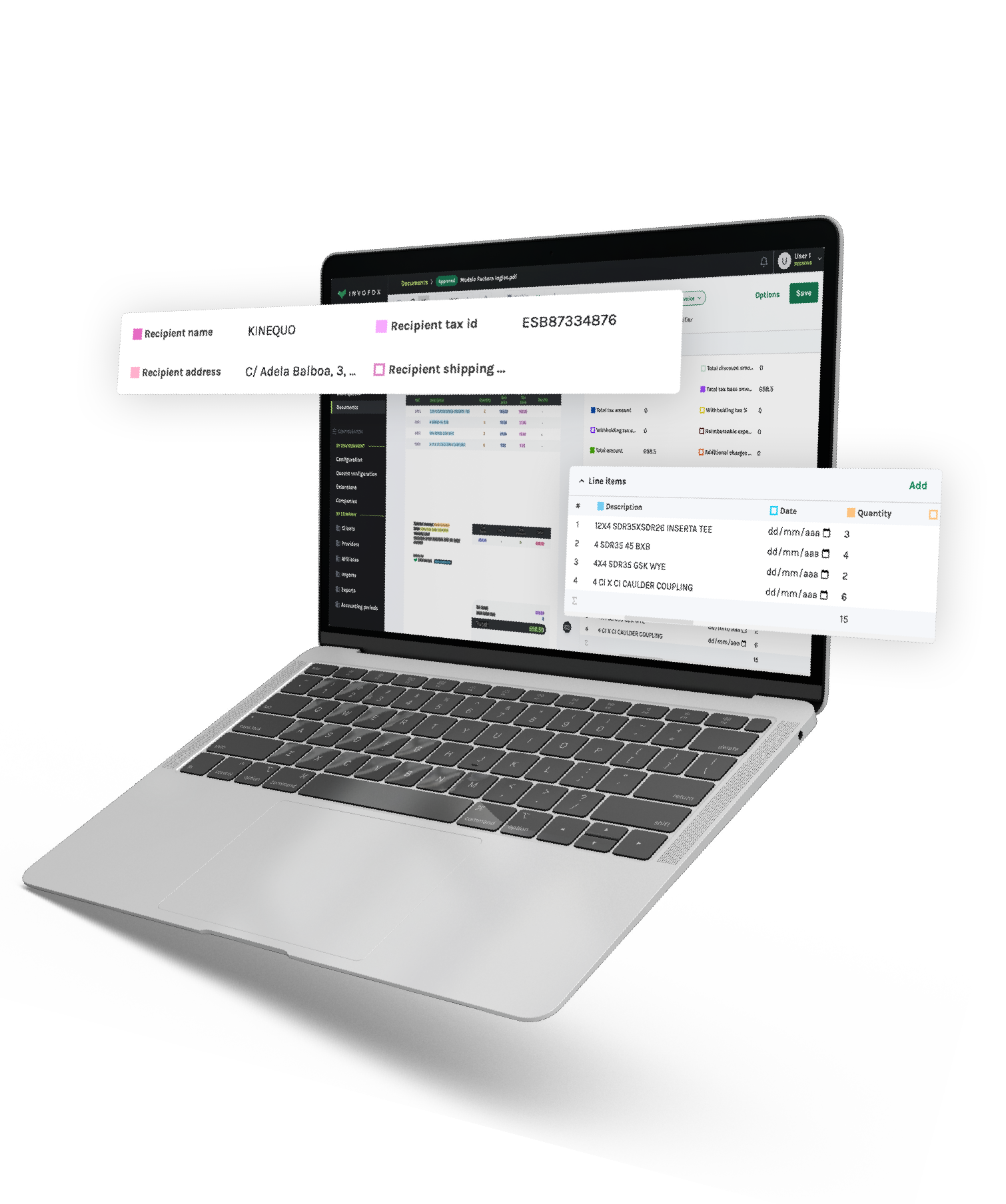

This is where platforms such as Invofox fundamentally change the equation. Instead of reacting to every Gemini update or retraining workflows after each model release, teams interact with a single stable interface while the platform manages model selection, routing, validation, and optimization behind the scenes.

With this approach, organizations do not need to rewrite prompts when models change, rebuild pipelines for new versions, or chase fluctuating LLM costs across providers. One API key connects to a production-ready system where upgrades happen internally and performance remains consistent.

Consider a document processing team that built its workflow on an earlier Gemini model. When a newer version rolls out, extraction outputs shift, validation rules fail, and inference costs rise. Without abstraction, the team spends weeks reworking prompts and logic. With Invofox, the transition happens automatically, with no downtime or emergency fixes.

Stability is not just a technical preference but a business requirement. When outputs fluctuate, customer experiences become inconsistent, analytics lose reliability, and support teams face rising ticket volumes. Production systems depend on predictability, and unpredictability is precisely what frequent AI model upgrades introduce.

Leaders evaluating AI vendors should therefore ask a different question. Instead of which model is strongest today, they should ask which platform will remain dependable tomorrow. Model strength changes monthly, but operational resilience determines long-term return on investment. Stability, cost control, and workflow continuity ultimately matter more than benchmark scores.

The broader lesson becomes obvious once you’ve experienced it. LLM model changes are ongoing, and chasing every new release is not sustainable. The teams that succeed in production AI workflows are the ones building on foundations designed to absorb volatility rather than expose it.

The real takeaway from the Gemini update is simple: plan for change or risk disruption.

If you're deploying LLMs in production and want to reduce the operational impact of model churn, book a demo to see how Invofox keeps systems stable while AI keeps evolving.

Head of Product at Invofox (YC S22), where he leads the strategy and development of AI-powered document processing products for software and SaaS companies. He works at the intersection of product, technology and growth, helping teams turn complex documents into reliable, production-ready data.

Subscribe for tips and insights from Invofox — the intelligent document processing (IDP) platform that helps businesses automate invoices, receipts, and more.
