3.10.2026

Recently, Invofox CEO Alberto Gimeno participated as a judge at the YC x Google DeepMind Multimodal Frontier Hackathon, an in-person event held in San Francisco that brought together founders, engineers, and researchers working at the cutting edge of multimodal AI.

The event, hosted by Y Combinator in collaboration with Google DeepMind, focused on exploring new types of applications enabled by the latest generation of multimodal AI systems. Participants were encouraged to build products that combine audio, video, and image generation capabilities, moving beyond the traditional “chatbot” paradigm that has dominated much of the current AI landscape.

More details about the event can be found on the official page:

https://events.ycombinator.com/deepmind-march26

As part of the judging process, Alberto joined a group of founders and engineers, including Y Combinator founders and Google DeepMind engineers, to review projects built during the hackathon.

In the initial evaluation round, Alberto independently reviewed and scored several submitted projects, providing feedback on their technical execution, originality, and potential real-world impact.

This early review helped determine which teams would move forward to the final stage of the competition. The final judging panel consisted exclusively of Google engineers, who selected the overall winners from the shortlisted projects.

During the preliminary judging round, Alberto evaluated several emerging ideas exploring new applications of multimodal AI.

These projects demonstrated how quickly multimodal AI capabilities are evolving and how developers are experimenting with entirely new categories of software built on top of these models.

Based on the preliminary evaluation process, Solus Forge was selected as a finalist to advance to the final round of judging.

The project stood out for its strong combination of technical feasibility, innovation, and real-world applicability, and was among the top-scoring projects in Alberto’s evaluation.

The hackathon encouraged teams to experiment with advanced multimodal AI technologies developed by Google DeepMind.

By building with these tools, participants explored new forms of multimodal applications that combine visual, audio, and language-based reasoning.

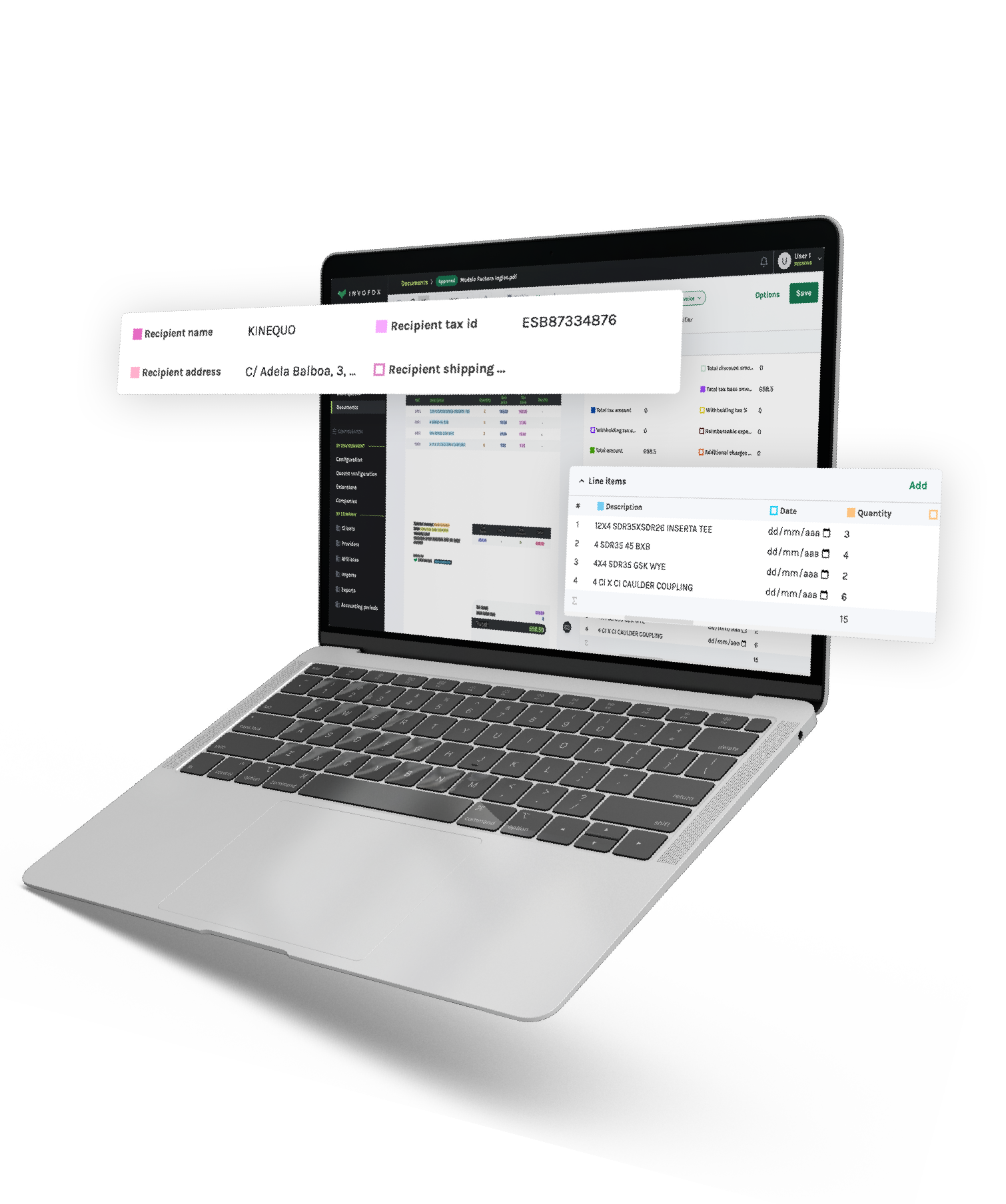

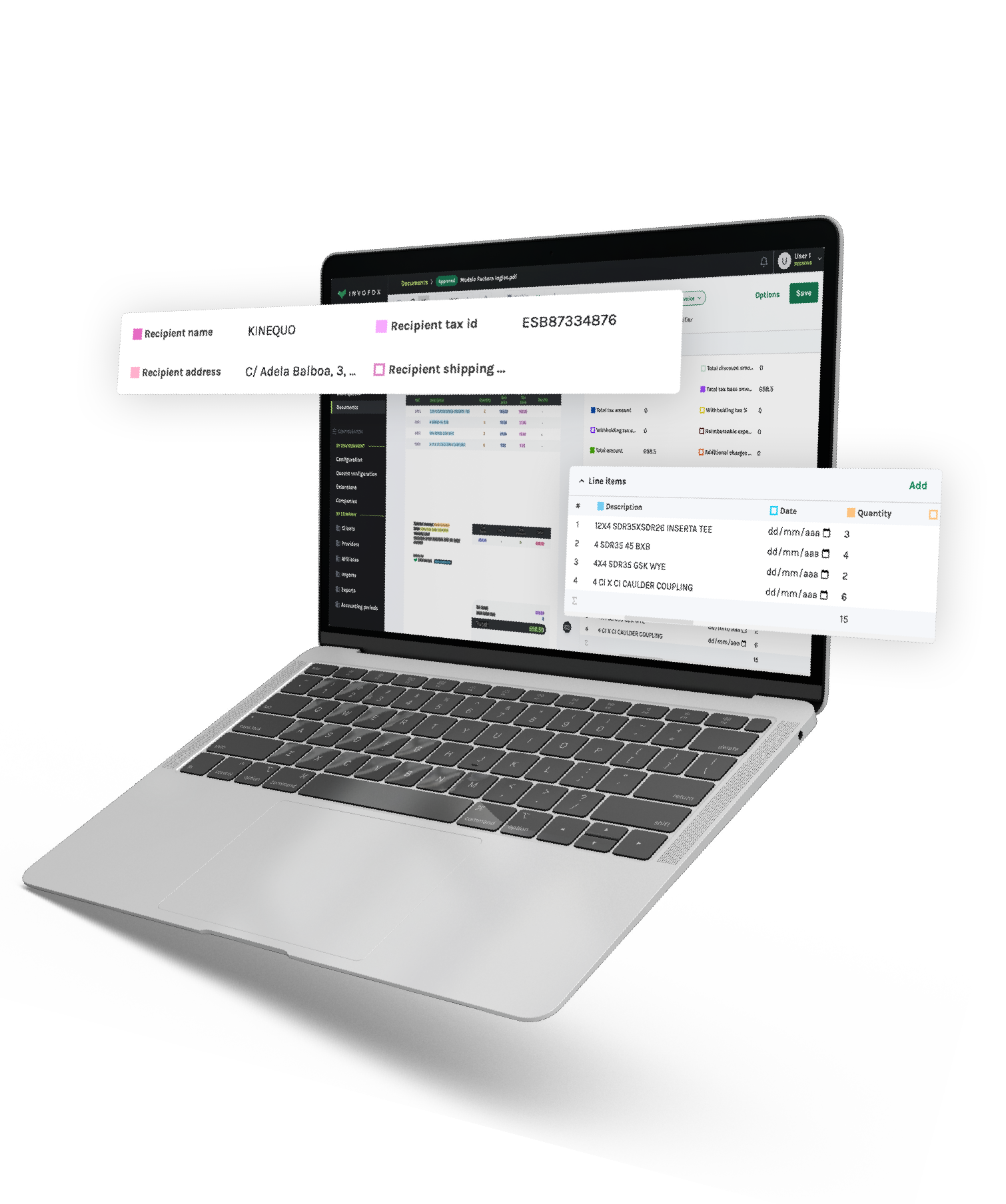

For industry practitioners like Alberto, who works closely with AI systems that extract and structure information from complex documents at Invofox, participating in events like this is an opportunity to evaluate emerging ideas and contribute expertise to the broader AI ecosystem.

Hackathons like the YC x Google DeepMind Multimodal Frontier Hackathon serve as important environments for experimentation and collaboration between founders, engineers, and researchers.

By bringing together experts from leading organizations and early-stage builders, these events help accelerate the development of new AI applications and highlight the expanding possibilities of multimodal systems.

For Invofox, staying closely connected to these developments helps inform how next-generation AI capabilities may shape the future of document automation and data extraction technologies.

Thuy Vi Nguyen is an Inbound Sales Marketing Specialist at Invofox, where she focuses on growth, demand generation, and go-to-market strategy. She has over a decade of experience in B2B SaaS across marketing, sales, and customer experience, and has led marketing initiatives for multiple technology companies.
